Archive

The power of negative thinking: Combinatorial and geometric inequalities

It’s been a while since I blogged about mathematics. You know why, of course: there are so many issues in the real world, the imaginary world is just not as relevant as it used to be. Well, at least that’s how I felt until now. But the latest paper we wrote with Swee Hong Chan was so much fun (and took so much effort), the wait is over. There is also some interesting backstory before we can state the result.

What is the inverse problem in Enumerative Combinatorics?

Before focusing on combinatorics, note that inverse problems are everywhere in mathematics. Sometimes they are obvious and stated as such, and sometimes we are so used to these problems we don’t think of them as inverse problems at all. You are probably thinking of major problems (both solved and unsolved), like the inverse Galois problem, Cauchy problem, Minkowski problem or the Alexandrov existence theorem. But really, even prime factorization, integration, taking logs and subtraction can be viewed this way. As I said — they are everywhere.

In Enumerative Combinatorics, a typical problem goes like this: given some set A, find the number N:=|A|. Finding a combinatorial interpretation is an inverse problem: given N, find A such that N=|A|. This might seem silly to an untrained eye: obviously, every nonnegative integer counts something. But it is completely normal to have constraints on the type of solution that you want — this case is no different.

Indeed, if you think about it, the direct problem is not all that well-defined either. For example, do you want asymptotics or just some kind of bounds on N? Or maybe you want a closed formula? But what is a closed formula? Does it have to be a product formula, or will some kind of summation work? Can it be a multisum with both positive and negative terms? Or maybe you are ok with a closed formula for the generating function in case A=∪A_n? But what exactly is a closed formula for a GF? The list of questions goes on.

Five years ago, I discussed various answers to these questions in my ICM paper, with ideas going back to Wilf’s beautiful paper (see also Stanley’s answer). If anything, the answers are not short and sometimes technical. Although my formulations are well-defined, positive results can be hard to prove, while negative results can be really hard to prove. Such is life, I suppose.

So what exactly is a combinatorial interpretation?

It is easy to go philosophical (as Rota does or I do on somewhat broader questions), but let’s focus on math here. I started thinking about the problem when I came to UCLA over twelve years ago, and struggled to find a good answer. I discussed the problem in my Notices paper when I finally made peace with the computational complexity approach. Of the multiple definitions, there is only one that is convincing, workable and broad enough:

Combinatorial interpretation = #P

I explain the answer in my lengthy OPAC survey on the subject, and in my somewhat entertaining OPAC talk (slides). I have miles to say about this, maybe some other time.

To understand why I care, it’s worth thinking of the origin of the problem. Say, you have an inequality a ≥ b between the numbers of certain combinatorial objects, where a=|A|, b=|B|. If you have a nice explicit injection φ : B → A, this gives a combinatorial interpretation for the defect (a–b) as the number of elements in A without a preimage. If φ and its inverse are computable in polynomial time, this shows that (a–b) counts the number of objects which can be certified to be correct in polynomial time. Thus, the definition of #P.

Now, as always happens in these cases, the reason for the definition is not to give a positive answer (“you know it when you see it” was a guiding principle for a long time), but to give a negative answer. What if many of these combinatorial interpretation problems Stanley discusses in his famous survey simply don’t have a solution? (see my OPAC survey linked above, and this MO discussion for the state of the art).
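To make the injection argument concrete, here is a minimal sketch on a textbook example that is not taken from the post: the reflection principle for lattice paths. In this illustration, A is the set of ±1 paths of length 2n ending at height 0, B is the set of such paths ending at height −2, and φ flips every step after the first visit to height −1. The function names are hypothetical. The elements of A without a preimage are exactly the paths that never dip below 0, and membership can be checked in linear time, which is the kind of polynomial-time certificate the definition of #P asks for.

```python
from itertools import combinations

def paths(n_steps, n_up):
    """Yield all +1/-1 step sequences of length n_steps with n_up up-steps."""
    for ups in combinations(range(n_steps), n_up):
        up_set = set(ups)
        yield tuple(1 if i in up_set else -1 for i in range(n_steps))

def phi(path):
    """Injection B -> A: flip every step after the first visit to height -1."""
    height = 0
    for i, step in enumerate(path):
        height += step
        if height == -1:
            return path[:i + 1] + tuple(-s for s in path[i + 1:])
    raise ValueError("path never reaches height -1")

def stays_nonnegative(path):
    """Polynomial-time certificate check: the path never dips below 0."""
    height = 0
    for step in path:
        height += step
        if height < 0:
            return False
    return True

def defect_elements(n):
    """Elements of A (balanced paths) with no preimage under phi."""
    A = set(paths(2 * n, n))        # a = C(2n, n)
    B = list(paths(2 * n, n - 1))   # b = C(2n, n-1), paths ending at height -2
    image = {phi(p) for p in B}
    assert len(image) == len(B)     # phi is injective
    assert image <= A               # phi lands in A
    return A - image

if __name__ == "__main__":
    for n in range(1, 6):
        leftover = defect_elements(n)
        assert all(stays_nonnegative(p) for p in leftover)
        print(n, len(leftover))
```

For n = 1, …, 5 the defect sizes are the Catalan numbers 1, 2, 5, 14, 42. The brute-force enumeration is only there to verify the injection on small cases; the point is that the defect is counted by objects with an easy membership test.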

To list my favorite open problem, do Kronecker coefficients g(λ,μ,ν) have a combinatorial interpretation? I don’t believe so, but to give a negative answer we need a definition. There is just no way around it. Note that we already have g(λ,μ,ν)= a(λ,μ,ν) – b(λ,μ,ν) for some numbers of combinatorial objects a and b (formally, these are #P functions). It is the injection that doesn’t seem to work. But why not?

Unfortunately, the universe of “not in #P” results is very small and includes only this FOCS paper with Christian Ikenmeyer and this SODA paper with Christian Ikenmeyer and Greta Panova. Simply put, such results are rare and hard to prove. Let me not explain them, but rather turn in the direction of my current work.

Poset inequalities

Since the inequalities like g(λ,μ,ν) ≥ 0 are so unapproachable in full generality, some four years ago I turned to inequalities on the number of linear extensions of finite posets. Many such inequalities are known in the literature, e.g. the XYZ inequality, the Sidorenko inequality, the Björner–Wachs inequality, etc. It is unclear whether the defect of the XYZ inequality has a combinatorial interpretation, but the other two certainly do (see our “Effective poset inequalities” paper with Swee Hong Chan and Greta Panova).

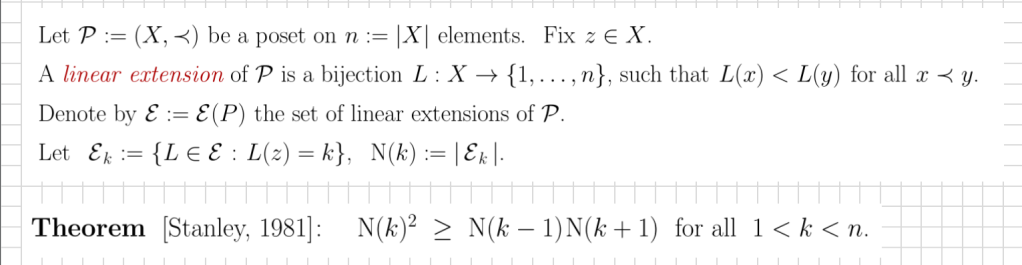

What we found most interesting and challenging, is the following remarkable Stanley’s inequality on the log-concavity of the number of certain linear extensions:

(this is a slide from my 2021 talk). In a remarkable breakthrough, Stanley resolved the Chung-Fishburn-Graham conjecture using the Alexandrov–Fenchel inequality (more on this later). What I was interesting in the following problem: Is the defect of Stanley’s inequality N(k)^2-N(k-1) N(k+1) in #P? This is still an open problem, and we don’t have tools to resolve it.
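For readers who want to see the defect on a small case, here is a brute-force sketch. It assumes the standard formulation of Stanley’s inequality: for a poset on n elements and a fixed element x, N(k) is the number of linear extensions L with L(x)=k. The function names and the toy poset are hypothetical, and the enumeration over all permutations is only feasible for tiny posets.

```python
from itertools import permutations

def stanley_counts(n, relations, x):
    """Return a list N with N[k] = number of linear extensions L with L(x) = k.

    Elements are 0..n-1; each pair (a, b) in relations means a < b in the poset.
    The list is padded so that N[0] = N[n+1] = 0.
    """
    counts = [0] * (n + 2)
    for order in permutations(range(n)):
        # position[e] is the value L(e) in {1, ..., n}
        position = {elem: i + 1 for i, elem in enumerate(order)}
        if all(position[a] < position[b] for a, b in relations):
            counts[position[x]] += 1
    return counts

def stanley_defects(counts):
    """Defect N(k)^2 - N(k-1) N(k+1) for k = 1..n."""
    n = len(counts) - 2
    return [counts[k] ** 2 - counts[k - 1] * counts[k + 1] for k in range(1, n + 1)]

if __name__ == "__main__":
    # Hypothetical toy poset: two disjoint chains 0 < 1 and 2 < 3, with x = 0.
    N = stanley_counts(4, [(0, 1), (2, 3)], x=0)
    print(N[1:5])               # [3, 2, 1, 0]
    print(stanley_defects(N))   # [9, 1, 1, 0]
```

Every defect is nonnegative, as the inequality predicts. The #P question asks whether these defects count some explicitly described objects, which this kind of computation cannot answer.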

It gets worse: in an effort to show that this inequality is in #P, two years ago we introduced a whole new technology of combinatorial atlas. We used this technology to prove a lot of new inequalities in this paper with Swee Hong Chan, including multivariate extensions of Stanley inequalities and correlation inequalities. We now know why this technology was never going to apply to the #P problem, but that’s all yet another story.

What we did in our new paper is attack a similar problem for the generalized Stanley inequality, which has the same statement but with additional constraints that L(x_i)=c_i for all 1 ≤ i ≤ m, where x_i are fixed poset elements and c_i are fixed integers. Stanley derived the log-concavity of these more general numbers from the AF inequality in one big swoosh. In our paper, we prove:

Corollary 1.5. The defect of the generalized Stanley inequality is not in #P, for all m ≥ 2 (unless PH collapses to a finite level).

Curiously, in addition to a lot of poset theoretic technology we are using the Yao-Knuth theorem in number theory. Our main result is stronger:

Theorem 1.3. The equality cases of the generalized Stanley inequality are not in PH, for all m ≥ 2 (unless PH collapses to a finite level).

Clearly, if the defect were in #P, then the problem “defect =? 0” would be in coNP, and the “not in #P” result follows. The complexity theoretic idea of the proof is distilled in our companion paper where we explain why the coincidence problem for domino tilings in R^3 is not in PH, and the same holds for many other hard combinatorial problems.

This underscores both the strength and the weakness of our approach. On the one hand, we prove a stronger result than we wanted. On the other hand, for m=0 it is known that the equality cases of the generalized Stanley inequality are in P. This is a remarkable result of Shenfeld and van Handel (actually, a consequence of their remarkable theory). In fact, we reprove and generalize the result in our combinatorial atlas paper. In the new paper, we prove the m=1 version of this result, using an (also remarkable) followup paper by Ma and Shenfeld. We conjecture that for m=2, the defect is already not in #P (Conjecture 10.2), but there seem to be difficult number theoretic obstacles to the proof.

In summary, we now know for sure that the defect of the generalized Stanley inequality does not have a combinatorial interpretation. In particular, there is no direct injective proof similar to that for the Sidorenko inequality, for example (cf. this old blog post). If you are deeply engaged with the subject (and why would you be, obviously?), you are happy. But if not — you probably shrug. Let me now explain why you should still care.

Geometric inequalities

It is rare when you can honestly say this, but the geometric inequalities really do go back to antiquity (see e.g. here and there), when the isoperimetric inequality in the plane was first discovered. Of the numerous inequalities that followed, note the Brunn–Minkowski inequality and the Minkowski quadratic inequality (MQI) for three convex bodies in R^3. These are all consequences of the Alexandrov–Fenchel inequality mentioned above. However, when it comes to equality conditions there is a bit of a wrinkle.

For the isoperimetric inequality in the plane, the equality cases are obvious (discs), and there is an interesting history of proofs by symmetrization. For the BM inequality, the equality cases are homothetic convex bodies, but the proof is very far from obvious and requires the mixed volume machinery. For the MQI, the equality conditions were known only in some special cases, and resolved in full generality only recently by Shenfeld and van Handel.

For the AF inequality, the effort to understand the equality conditions goes back to A. D. Alexandrov, who found equality conditions in some cases:

Serious difficulties occur in determining the conditions for equality to hold in the general inequalities just derived. [Alexandrov, 1937]

In 1985, Rolf Schneider formulated a workable conjecture on the equality conditions, which remains out of reach in full generality. He made a strong case for the importance of the problem:

As [AF inequality] represents a classical inequality of fundamental importance and with many applications, the identification of the equality cases is a problem of intrinsic geometric interest. Without its solution, the Brunn–Minkowski theory of mixed volumes remains in an uncompleted state. [Schneider, 1994]

In the remarkable paper mentioned above, Shenfeld and van Handel resolved several special cases of the conjecture. Notably, they gave a complete characterization of the equality conditions for convex polytopes, in the sense of extracting all geometry from the problem, and stating the condition in terms of equality of certain mixed volumes. This is where we come in.

Equality cases of the AF inequality are not in PH

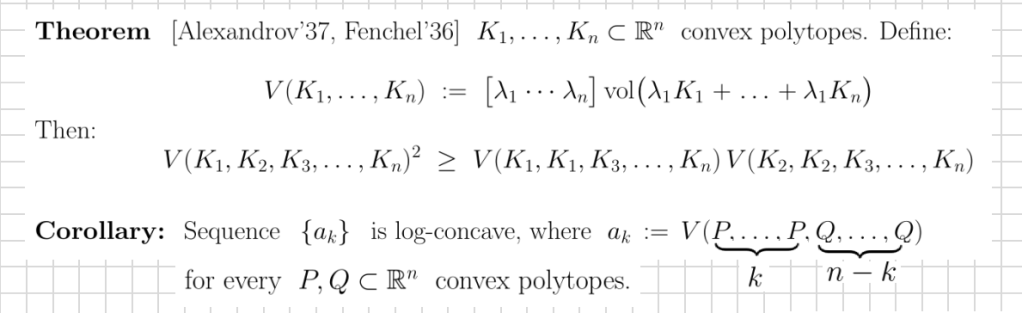

To understand the way Stanley derived his inequality from the AF inequality, it’s worth first explaining the connection to log-concavity:

Stanley considered sections P, Q of the order polytope associated with a given poset and concluded log-concavity for the numbers N(k) via a simple calculation.

Now, our “not in PH” theorem on the equality cases of Stanley’s inequality and Stanley’s calculation imply that equality cases of the AF inequality are also not in PH (under the same complexity assumptions plus computational setup on how the polytopes are presented). In some sense, this says that the equality cases of the AF inequality can never be fully described, or at least the description by Shenfeld and van Handel is probably the best one can do.

In the spirit of the #P application, our result also implies that there is unlikely to be a stability result for the AF inequality in full generality (in this sense), see Corollary 1.2 in the paper. Omitting precise statements and technicalities, let us only mention that Bonnesen’s inequality is a basic stability result which can be viewed as a sharp extension of the isoperimetric inequality, including the equality conditions. What we are saying is: don’t expect to ever see anything like that for the AF inequality (see the paper for details).
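To illustrate what a stability result looks like, here is a small numerical sketch of Bonnesen’s inequality in its classical planar form, L^2 − 4πA ≥ π^2 (R − r)^2, where L is the perimeter, A the area, R the circumradius and r the inradius of a convex region. This form is supplied here for illustration and is not quoted from the post or the paper; the function names are hypothetical. The check is done on regular n-gons, where all four quantities have closed formulas.

```python
import math

def regular_polygon(n, circumradius=1.0):
    """Perimeter, area, inradius and circumradius of a regular n-gon."""
    perimeter = 2 * n * circumradius * math.sin(math.pi / n)
    area = 0.5 * n * circumradius ** 2 * math.sin(2 * math.pi / n)
    inradius = circumradius * math.cos(math.pi / n)
    return perimeter, area, inradius, circumradius

def isoperimetric_deficit(perimeter, area):
    """L^2 - 4*pi*A, which is >= 0 by the isoperimetric inequality."""
    return perimeter ** 2 - 4 * math.pi * area

def bonnesen_bound(inradius, circumradius):
    """pi^2 (R - r)^2, the lower bound on the deficit in Bonnesen's inequality."""
    return math.pi ** 2 * (circumradius - inradius) ** 2

if __name__ == "__main__":
    for n in (3, 4, 6, 12, 100):
        L, A, r, R = regular_polygon(n)
        print(n, isoperimetric_deficit(L, A), bonnesen_bound(r, R))
```

The right-hand side vanishes only when R = r, that is, for a disc, so a small deficit forces the region to be close to a disc. That quantitative control near equality is the kind of statement the paragraph above says should not be expected for the AF inequality in full generality.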

UPDATE (Feb. 7, 2024). The “m ≥ 6” was later improved to “m ≥ 2”, see our paper on the arXiv. See this video of my Oberwolfach talk on the subject. See also this blog post by Gil Kalai. Note: This paper was accepted to appear at STOC 2024.

The journal hall of shame

As you all know, my field is Combinatorics. I care about it. I blog about it endlessly. I want to see it blossom. I am happy to see it accepted by the broad mathematical community. It’s a joy to see it represented at (most) top universities and recognized with major awards. It’s all mostly good.

Of course, not everyone is on board. This is normal. Changing views is hard. Some people and institutions continue insisting that Combinatorics is mostly trivial nonsense (or at least large parts of it). This is an old fight best not rehashed.

What I thought I would do is highlight a few journals which are particularly hostile to Combinatorics. I also make some comments below.

Hall of shame

The list below is in alphabetical order and includes only general math journals.

(1) American Journal of Mathematics

The journal had a barely mediocre record of publishing in Combinatorics until 2008 (10 papers out of 6544, less than one per 12 years of existence, mostly in the years just before 2008). But then something snapped. Zero Combinatorics papers since 2009. What happened??

The journal keeps publishing in other areas, obviously. Since 2009 it published a total of 696 papers. And yet not a single Combinatorics paper was deemed good enough. Really? Some 10 years ago while writing this blog post I emailed the AJM Editor Christopher Sogge asking if the journal has a policy or an internal bias against the area. The editorial coordinator replied:

I spoke to an editor: the AJM does not have any bias against combinatorics. [2013]

You could’ve fooled me… Maybe start by admitting you have a problem.

(2) Cambridge Journal of Mathematics

This is a relative newcomer, established just ten years ago in 2013. CJM claims to:

publish papers of the highest quality, spanning the range of mathematics with an emphasis on pure mathematics.

Out of the 93 papers to date, it has published precisely Zero papers in Combinatorics. Yes, in Cambridge, MA which has the most active combinatorics seminar that I know (and used to co-organize twice a week). Perhaps, Combinatorics is not “pure” enough or simply lacks “papers of highest quality”.

Curiously, Jacob Fox is one of the seven “Associate Editors”. This makes me wonder about the CJM editorial policy, as in can any editor accept any paper they wish or does the decision have to be made by a majority of editors? Or, perhaps, each paper is accepted only by a unanimous vote? And how many Combinatorics papers were provisionally accepted only to be rejected by such a vote of the editorial board? Most likely, we will never know the answers…

The journal also had a mediocre record in Combinatorics until 2006 (12 papers out of 2661). None among the last 1172 papers (since 2007). Oh, my… I wrote in this blog post that at least the journal is honest about Combinatorics being low priority. But I think it still has no excuse. Read the following sentence on their front page:

Papers on other topics are welcome if they are of broad interest.

So, what happened in 2007? Papers in Combinatorics suddenly lost broad interest? Quanta Magazine must be really confused by this all…

(4) Publications Mathématiques de l’IHÉS

Very selective. Naturally. Zero papers in Combinatorics. Yes, since 1959 they published the grand total of 528 papers. No Combinatorics papers made the cut. I had a very limited interaction with the journal when I submitted my paper which was rejected immediately. Here is what I got:

Unfortunately, the journal has such a severe backlog that we decided at the last meeting of the editorial board not to take any new submissions for the next few months, except possibly for the solution of a major open problem. Because of this I prefer to reject you paper right now. I am sorry that your paper arrived during that period. [2015]

I am guessing the editor (very far from my area) assumed that the open problem that I resolved in that paper could not possibly be “major” enough. Because it’s in Combinatorics, you see… But whatever, let’s get back to ZERO. Really? In the past 50 years Paris has been a major research center in my area, one of the best places to do Enumerative, Asymptotic and Algebraic Combinatorics. And none of that work was deemed worthy by this venerable journal??

Note: I used this link for a quick guide to top journals. It’s biased, but really any other ranking would work just as well. I used MathSciNet to determine whether papers are in Combinatorics (search for MSC Primary = 05).

How should we understand this?

It’s all about making an effort. Some leading general journals like Acta, Advances, Annals, Duke, Inventiones, JAMS, JEMS, Math. Ann., Math. Z., etc. found a way to attract and publish Combinatorics papers. Mind you they publish very few papers in the area, but whatever biases they have, they apparently want to make sure combinatorialists would consider sending their best work to these journals.

The four hall of shamers clearly found a way to repel papers in Combinatorics, whether by exhibiting an explicit bias, not having a combinatorialist on the editorial board, never encouraging the best people in the area to submit, or using random people to give “quick opinions” on work far away from their area of expertise.

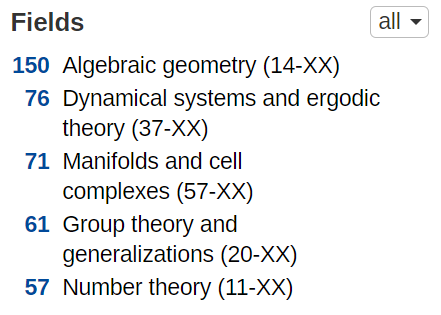

Most likely, there are several “grandfathered areas” in each journal, so with the enormous growth of submissions there is simply no room for other areas. Here is a breakdown of the top five areas in Publ. Math. IHES, helpfully compiled by ZbMATH (out of 528, remember?):

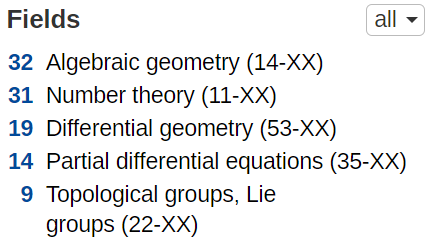

Of course, for the CJM, the whole “grandfathered areas” reasoning does not apply. Here is their breakdown of the top five areas (out of 93). See any similarities? Looks like this is a distribution of areas that the editors think are “very very important”:

When 2/3 of your papers are in just two areas, “spanning the range of mathematics” this journal is not. Of course, it really doesn’t matter how the four hall of shamers managed to achieve their perfect record for so many years — the results speak for themselves.

What should you do about it?

Not much, obviously, unless you are an editor at any of these four journals. Please don’t boycott them: it’s counterproductive and they are already boycotting you. If you work in Combinatorics, you should consider submitting your best work there, especially if you have tenure and have nothing to lose by waiting. This was the advice I gave vis-à-vis the Annals and it still applies.

But perhaps you can also shame these journals. This was also my advice on MDPI Mathematics. Here some strategy is useful, so perhaps do this. Any time you are asked for a referee report or for a quick opinion, ask the editor: Does your journal have a bias against Combinatorics? If they want your help they will say “No”. If you write a positive opinion or a report, follow up and ask if the paper is accepted. If they say “No”, ask if they still believe the journal has no bias. Aim to exhaust them!

More broadly, tell everyone you know that these four journals have an anti-Combinatorics bias. As I quoted before, Noga Alon thinks that “mathematics should be considered as one unit”. Well, as long as these journals don’t publish in Combinatorics, I will continue to disagree, and so should you. Finally, if you know someone on the editorial board of these four journals, please send them a link to this blog post and ask them to write a comment. We can all use some explanation…

Innovation anxiety

I am on record as liking the status quo of math publishing. It’s very far from ideal as I repeatedly discuss on this blog, see e.g. my posts on the elitism, the invited issues, the non-free aspect of it in the electronic era, and especially the pay-to-publish corruption. But overall it’s ok. I give it a B+. It took us about two centuries to get where we are now. It may take us a while to get to an A.

Given that there is room for improvement, it’s unsurprising that some people make an effort. The problem is that their efforts may be moving us in the wrong direction. I am talking specifically about two ideas that frequently come up from people with the best intentions: abolishing peer review and anonymizing the author’s name at the review stage. The former is radical, detrimental to our well being and unlikely to take hold in the near future. The latter is already here and is simply misguided.

Before I take on both issues, let me take a bit of a rhetorical detour to make a rather obvious point. I will be quick, I promise!

Don’t steal!

Well, this is obvious, right? But why not? Let’s set all moral and legal issues aside and discuss it as adults. Why should a person X be upset if Y stole an object A from Z? Especially if X doesn’t know either Y or Z, and doesn’t really care who A should belong to. Ah, I see you really don’t want to engage with the issue — just like me you already know that this is appalling (and criminal, obviously).

However, if you look objectively at the society we live in, there is clearly some gray area. Indeed, some people think that taxation is a form of theft (“taking money by force”, you see). Millions of people think that illegally downloading movies is not stealing. My university administration thinks stealing my time by making me fill out all kinds of forms is totally kosher. The country I grew up in was very proud about the many ways it stole my parents’ rights for liberty and the pursuit of happiness (so that they could keep their lives). The very same country thinks it’s ok to invade and steal territory from a neighboring country. Apparently many people in the world are ok with this (as in “not my problem”). Not comparing any of these, just challenging the “isn’t it obvious” premise.

Let me give a purely American answer to the “why not” question. Not the most interesting or innovative argument perhaps, but most relevant to the peer review discussion. Back in September 1789, Thomas Jefferson was worried about the constitutional precommitment. Why not, he wondered, have a revolution every 19 years, as a way not to burden future generations with rigid ideas from the past?

In February 1790, James Madison painted a grim picture of what would happen: “most of the rights of property would become absolutely defunct and the most violent struggles be generated” between property haves and have-nots, making remedy worse than the disease. In particular, allowing theft would be detrimental to continuing peaceful existence of the community (duh!).

In summary: a fairly minor change in the core part of the moral code can lead to drastic consequences.

Everyone hates peer review!

Indeed, I don’t know anyone who succeeded in academia without a great deal of frustration over the referee reports, many baseless rejections from the journals, or without having to spend many hours (days, weeks) writing their own referee reports. It’s all part of the job. Not the best part. The part well hidden from outside observers who think that professors mostly teach or emulate a drug cartel otherwise.

Well, help is on the way! Every now and then somebody notable comes along and proposes to abolish the whole thing. Here is one, two, three just in the last few years. Enough? I guess not. Here is the most recent one, by Adam Mastroianni, tweeted by Marc Andreessen to his 1.1 million followers.

This is all laughable, right? Well, hold on. Over the past two weeks I spoke to several well known people who think that abolishing peer review would make the community more equitable and would likely foster innovation. So let’s address these objections seriously, point by point, straight from Mastroianni’s article.

(1) “If scientists cared a lot about peer review, when their papers got reviewed and rejected, they would listen to the feedback, do more experiments, rewrite the paper, etc. Instead, they usually just submit the same paper to another journal.” Huh? The same level journal? I wish…

(2) “Nobody cares to find out what the reviewers said or how the authors edited their paper in response” Oh yes, they do! Thus multiple rounds of review, sometimes over several years. Thus a lot of frustration. Thus occasional rejections after many rounds if the issue turns out non-fixable. That’s the point.

(3) “Scientists take unreviewed work seriously without thinking twice.” Sure, why not? Especially if they can understand the details. Occasionally they give well known people the benefit of the doubt, at least for a while. But then they email you and ask “Is this paper ok? Why isn’t it published yet? Are there any problems with the proof?” Or sometimes some real scrutiny happens outside of the peer review.

(4) “A little bit of vetting is better than none at all, right? I say: no way.” Huh? In math this is plainly ridiculous, but the author is moving in another direction. He supports this outrageous claim by saying that in biomedical sciences the peer review “fools people into thinking they’re safe when they’re not. That’s what our current system of peer review does, and it’s dangerous.” Uhm. So apparently Adam Mastroianni thinks if you can’t get 100% certainty, it’s better to have none. I feel like I’ve heard the same sentiment from my anti-masking relatives.

Obviously, I wouldn’t know and honestly couldn’t care less about how biomedical academics do research. Simply put, I trust experts in other fields and don’t think I know better than them what they do, should do or shouldn’t do. Mastroianni uses “nobody” 11 times in his blog post — must be great to have such a vast knowledge of everyone’s behavior. In any event, I do know that modern medical advances are nothing short of spectacular overall. Sounds like their system works really well, so maybe let them be…

The author concludes by arguing that it’s so much better to just post papers on the arXiv. He did that with one paper, put some jokes in it and people wrote him nice emails. We are all so happy for you, Adam! But wait, who says you can’t do this with all your papers in parallel with journal submissions? That’s what everyone in math does, at least the arXiv part. And if the journals where you publish don’t allow you to do that, that’s a problem with these specific journals, not with the whole peer review.

As for the jokes, I guess I am a mini-expert. Many of my papers have at least one joke. Some are obscure. Some are not funny. Some are both. After all, “what’s life without whimsy”? The journals tend to be ok with them, although some make me work for it. For example, in this recent paper, the referee asked me to specifically explain in the acknowledgements why I am thankful to Jane Austen. So I did as requested: it was an inspiration behind the first sentence (it’s on my long list of starters in my previous blog post). Anyway, you can do this, Adam! I believe in you!

Everyone needs peer review!

Let’s try to imagine now what would happen if the peer review is abolished. I know, this is obvious. But let’s game it out, post-apocalyptic style.

(1) All papers will be posted on the arXiv. In a few curious cases an informal discussion will emerge, like this one about this recent proof of the four color theorem. Most papers will be ignored just like they are ignored now.

(2) Without a neutral vetting process the journals will turn to publishing “who you know”, meaning the best known and best connected people in the area as “safe bets” whose work was repeatedly peer reviewed in the past. Junior mathematicians will have no way to get published in leading journals without collaborating (i.e. writing “joint papers”) with top people in the area.

(3) Knowing that their papers won’t be refereed, people will start taking shortcuts in their arguments. Soon enough some fraction will turn out to be unsalvageably incorrect. Embarrassments like the ones discussed in this page will become a common occurrence. Eventually the Atiyah-style proofs of famous theorems will become widespread, confusing anyone and everyone.

(4) Granting agencies will start giving grants only to the best known people in the area who have most papers in best known journals (if you can’t peer review papers, you can’t expect to peer review grant proposals, right?) Eventually they will just stop, opting to give more money to best universities and institutions, in effect outsourcing their work.

(5) Universities will eventually abolish tenure as we know it, because if anyone is free to work on whatever they want without real rewards or accountability, what’s the point of tenure protection? When there are no objective standards, in university hiring the letters will play the ultimate role along with many biases and random preferences by the hiring committees.

(6) People who work in deeper areas will be spending an extraordinary amount of time reading and verifying earlier papers in the area. Faced with these difficulties graduate students will stay away from such areas opting for more shallow areas. Eventually these areas will diminish to the point of near-extinction. If you think this is unlikely, look into post-1980 history of finite group theory.

(7) In shallow areas, junior mathematicians will become increasingly more innovative to avoid reading older literature, but rather try to come up with a completely new question or a new theory which can be at least partially resolved in 10 pages. They will start running unrefereed competitive conferences where they will exhibit their little papers as works of modern art. The whole of math will become subjective and susceptible to fashion trends, not unlike some parts of theoretical computer science (TCS).

(8) Eventually people in other fields will start saying that math is trivial and useless, that everything they do can be done by an advanced high schooler in 15 min. We’ve seen this all before, think candid comments by Richard Feynman, or these uneducated proclamations by this blog’s old villain Amy Wax. In regards to combinatorics, such views were prevalent until relatively recently, see my “What is combinatorics” with some truly disparaging quotations, and this interview by László Lovász. Soon after, everyone (physics, economics, engineering, etc.) will start developing their own kind of math, which will be the end of the whole field as we know it.

…

(100) In the distant future, after the human civilization dies and rises up again, historians will look at the ruins of this civilization and wonder what happened? They will never learn that it all started with Adam Mastroianni when he proclaimed that “science must be free”.

Less catastrophic scenarios

If abolishing peer review does seem a little farfetched, consider the following less drastic measures to change or “improve” peer review.

(i) Say, you allow simultaneous submissions to multiple journals, whichever accepts first gets the paper. Currently, the waiting time is terribly long, so one can argue this would be an improvement. In support of this idea, one can argue that in journalism pitching a story to multiple editors is routine, that job applications are concurrent to all universities, etc. In fact, there is even an algorithm to resolve these kinds of situations successfully. Let’s game out this fantasy.

The first thing that would happen is that journals would be overwhelmed with submissions. The referees are already hard to find. After the change, they would start refusing all requests since they would also be overwhelmed with them and it’s unclear if the report would even be useful. The editors would refuse all but a few selected papers from leading mathematicians. Chat rooms would emerge in the style “who is refereeing which paper” (cf. PubPeer) to either collaborate or at least not make redundant effort. But since it’s hard to trust anonymous claims “I checked and there are no issues with Lemma 2 in that paper” (could that be the author?), these chats will either show real names thus leading to other complications (see below), or cease to exist.

Eventually the publishers will start asking for a signed official copyright transfer “conditional on acceptance” (some already do that), and those in violation will be hit with lawsuits. Universities will change their faculty code of conduct to include such copyright violations as a cause for dismissal, including tenure removal. That’s when the practice will stop and be back to normal, at great cost obviously.

(ii) Non-anonymizing referees is another perennial idea. Wouldn’t it be great if the referees got some credit for all the work that they do (so they can list it on their CVs)? Even better if their referee report is available to the general public to read and scrutinize, etc. Win-win-win, right?

No, of course not. Many specialized sub-areas are small so it is hard to find a referee. For the authors, it’s relatively easy to guess who the referees are, at least if you have some experience. But there is still this crucial ambiguity as in “you have a guess but you don’t know for sure” which helps maintain friendship or at least collegiality with those who have written a negative referee report. You take away this ambiguity, and everyone will start refusing refereeing requests. Refereeing is hard already, there is really no need to risk collegial relationships as a result, especially if you are both going to be working in the area for years or even decades to come.

(iii) Let’s pay the referees! This is similar but different from (ii). Think about it — the referees are hard to find, so we need to reward them. Everyone knows that when you pay for something, everyone takes this more seriously, right? Ugh. I guess I have some news for you…

Think it over. You got a technical 30 page paper to referee. How much would you want to get paid? You start doing a mental calculation. Say, at a very modest $100/hr it would take you maybe 10-20 hours to write a thorough referee report. That’s $1-2K. Some people suggest $50/hr but that was before the current inflation. While I do my own share of refereeing, personally, I would charge more per hour as I can get paid better doing something else (say, teach our Summer school). For a traditional journal to pay this kind of money per paper is simply insane. Their budgets are relatively small, let me spare you the details.
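For the record, the mental calculation above fits in a few lines of Python. This is only a sketch: the function name is made up for illustration, and the only inputs are the hourly rates and the 10-20 hour range quoted in the text.

```python
def referee_fee_range(hourly_rate, min_hours=10, max_hours=20):
    """Return the (low, high) cost in dollars of one thorough referee report."""
    return hourly_rate * min_hours, hourly_rate * max_hours


# The two hourly rates mentioned above.
for rate in (50, 100):
    low, high = referee_fee_range(rate)
    print(f"${rate}/hr: ${low:,} to ${high:,} per report")
```

Multiply the result by two or three referees per paper and the per-paper cost grows accordingly.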

Now, who can afford that kind of money? Right — we are back to the open access journals who would pass the cost to the authors in the form of an APC. That’s when the story turns from bad to awful. For that kind of money the journals would want a positive referee report since rejected authors don’t pay. If you are not willing to play ball and give them a positive report, they will stop inviting you to referee, leading to even more corruption than these journals already have in the form of pay-to-publish.

You can probably imagine that this won’t end well. Just talk to medical or biological scientists who grudgingly pay Nature or Science about 3K from their grants (which are much larger than ours). They pay because they have to, of course, and if they balk they might not get a new grant, setting back their career.

Double blind refereeing

In math, this means that the authors’ names are hidden from referees to avoid biases. The names are visible to the editors, obviously, to prevent “please referee your own paper” requests. The authors are allowed to post their papers on their websites or the arXiv, where they can be easily found by the title, so they don’t suffer from anxieties about their career or competitive pressures.

Now, in contrast with other “let’s improve the peer review” ideas, this is already happening. In other fields this has been happening for years. Closer to home, conferences in TCS have long resisted going double blind, but recently FOCS 2022, SODA 2023 and STOC 2023 all made the switch. Apparently they found Boaz Barak’s arguments unpersuasive. Well, good to know.

Even closer to home, a leading journal in my own area, Combinatorial Theory, turned double blind. This is not a happy turn of events, at least not from my perspective. I published 11 papers in JCTA, before the editorial board broke off and started CT. I have one paper accepted at CT which had to undergo the new double blind process. In total, this is 3 times as many as any other journal where I published. This was by far my favorite math journal.

Let’s hear from the journal why they did it (original emphasis):

The philosophy behind doubly anonymous refereeing is to reduce the effect of initial impressions and biases that may come from knowing the identity of authors. Our goal is to work together as a combinatorics community to select the most impactful, interesting, and well written mathematical papers within the scope of Combinatorial Theory.

Oh, sure. Terrific goal. I did not know my area has a bias problem (especially compared to many other areas), but of course how would I know?

Now, surely the journal didn’t think this change would be free? The editors must have compared pluses and minuses, and decided that on balance the benefits outweigh the cost, right? The journal is mum on that. If any serious discussion was conducted (as I was told), there is no public record of it. Here is what the journal says about how the change is implemented:

As a referee, you are not disqualified to evaluate a paper if you think you know an author’s identity (unless you have a conflict of interest, such as being the author’s advisor or student). The journal asks you not to do additional research to identify the authors.

Right. So let me try to understand this. The referee is asked to make a decision whether to spend upwards of 10-20 hours on the basis of the first impression of the paper and without knowledge of the authors’ identity. They are asked not to google the authors’ names, but it’s ok if they do because the journal can’t enforce this ethical guideline anyway. So let’s think this over.

Double take on double blind

(1) The idea is so old in other sciences, there is plenty of research on its relative benefits. See e.g. here, there or there. From my cursory reading, it seems there is clear evidence of a persistent bias based on the reputation of the educational institution. Other biases as well, to a lesser degree. This is beyond unfortunate. Collectively, we have to do better.

(2) Peer reviews have very different forms in different sciences. What works in some would not necessarily work in others. For example, TCS conferences never really had a proper refereeing process. The referees are given 3 weeks to write an opinion of the paper based on the first 10 pages. They can read the proofs beyond the 10 pages, but don’t have to. They write “honest” opinions to the program committee (invisible to the authors) and whatever they think is “helpful” to the authors. Those of you outside of TCS can’t even imagine the quality and biases of these fully anonymous opinions. In recent years, the top conferences introduced the rebuttal stage which is probably helpful to avoid random superficial nitpicking at lengthy technical arguments.

In this large scale superficial setting with rapid turnover, the double blind refereeing is probably doing more good than bad by helping avoid biases. The authors who want to remain anonymous can simply not make their papers available for about three months between the submission and the decision dates. The conference submission date is a solid date stamp for them to stake the result, and three months are unlikely to make major change to their career prospects. OTOH, the authors who want to stake their reputation on the validity of their technical arguments (which are unlikely to be fully read by the referees) can put their papers on the arXiv. All in all, this seems reasonable and workable.

(3) The journal process is quite a bit longer than the conference, naturally. For example, our forthcoming CT paper was submitted on July 2, 2021 and accepted on November 3, 2022. That’s 16 months, exactly 490 days, or about 20 days per page, including the references. This is all completely normal and is nobody’s fault (definitely not the handling editor’s). In the meantime my junior coauthor applied for a job, was interviewed, got an offer, accepted and started a TT job. For this alone, it never crossed our mind not to put the paper on the arXiv right away.
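The date arithmetic is easy to check with a short Python sketch (the variable names are mine). The plain difference between the two dates is 489 days, and the 490 figure comes out when both the submission day and the acceptance day are counted.

```python
from datetime import date

submitted = date(2021, 7, 2)
accepted = date(2022, 11, 3)

# Plain difference between the two dates, in days.
elapsed = (accepted - submitted).days
# Counting both the submission day and the acceptance day.
inclusive = elapsed + 1
# Whole calendar months between the two dates (the day of month is ignored).
months = (accepted.year - submitted.year) * 12 + (accepted.month - submitted.month)

print(elapsed, inclusive, months)
```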

Now, I have no doubt that the referee googled our paper simply because in our arguments we frequently refer to our previous papers on the subject for which this was a sequel (er… actually we refer to some [CPP21a] and [CPP21b] papers). In such cases, if the referee knows that the paper under review is written by the same authors there is clearly more confidence that we are aware of the intricate parts of our own technical details from the previous paper. That’s a good thing.

Another good thing to have is the knowledge that our paper is surviving public scrutiny. Whenever issues arise we fix them, whenever some conjectures are proved or refuted, we update the paper. That’s normal academic behavior no matter what Adam Mastroianni says. Our reputation and integrity are all we have, and one should make every effort to maintain them. But then the referee who has been procrastinating for a year can (and probably should) compare with the updated version. It’s the right thing to do.

Who wants to hide their name?

Now that I offered you some reasons why looking for paper authors is a good thing (at least in some cases), let’s look for negatives. Under what circumstances might the authors prefer to stay anonymous and not make their paper public on the arXiv?

(a) Junior researchers who are afraid their low status can reduce their chances to get accepted. Right, like graduate students. This will hurt them both mathematically and job wise. My biggest worry is probably that CT is encouraging more such cases.

(b) Serial submitters and self-plagiarists. Some people write many hundreds of papers. They will definitely benefit from anonymity. The editors know who they are and that their “average paper” has few if any citations outside of self-citations. But they are in a bind — they have to be neutral arbiters and judge each new paper independently of the past. Who knows, maybe this new submission is really good? The referees have no such obligation. On the contrary, they are explicitly asked to make a judgement. But if they have no name to judge the paper by, what are they supposed to do?

Now, this whole anonymity thing is unlikely to help serial submitters at CT, assuming that the journal standards remain high. Their papers will be rejected and they will move on, submitting down the line until they find an obscure enough journal that will bite. If other, somewhat less selective journals adopt the double blind review practice, this could improve their chances, however.

For CT, the difference is that in the anonymous case the referees (and the editors) will spend quite a bit more time per paper. For example, when I know that the author is a junior researcher from a university with limited access to modern literature and senior experts, I go out of my way to write a detailed referee report to help the authors, suggest some literature they are missing or potential directions for their study. If this is a serial submitter, I don’t. What’s the point? I’ve tried this a few times, and got the very same paper from another journal next week. They wouldn’t even fix the typos that I pointed out, as if saying “who has the time for that?” This is where Mastroianni is right: why would their 234-th paper be any different from 233-rd?

(c) Cranks, fraudsters and scammers. The anonymity is their defense mechanism. Say, you google the author and it’s Dănuț Marcu, a serial plagiarist of 400+ math papers. Then you look for a paper he is plagiarizing from and, if successful, make efforts to ban him from your journal. But if the author is anonymous, you try to referee. There is a very good chance you will accept since he used to plagiarize good but old and somewhat obscure papers. So you see — the author’s identity matters!

Same with the occasional zero-knowledge (ZK) aspirational provers whom I profiled at the end of this blog post. If you are an expert in the area and know of somebody who has tried for years to solve a major conjecture producing one false or incomplete solution after another, what do you do when you see a new attempt? Now compare with what you do if the paper is anonymous. Are you going to spend the same effort working out details of both papers? Wouldn’t you, in the case of a ZK prover, stop when you find a mistake in the proof of Lemma 2, while in the case of a genuine new effort try to work it out?

In summary: as I explained in my post above, it’s the right thing to do to judge people by their past work and their academic integrity. When authors are anonymous and cannot be found, the losers are the most vulnerable, while the winners are the nefarious characters. Those who do post their work on the arXiv come out about even.

Small changes can make a major difference

If you are still reading, you probably think I am completely 100% opposed to changes in peer review. That’s not true. I am only opposed to large changes. The stakes are just too high. We’ve been doing peer review for a long time. Over the decades we found a workable model. As I tried to explain above, even modest changes can be detrimental.

On the other hand, very small changes can be helpful if implemented gradually and slowly. This is what TCS did with their double blind review and their rebuttal process. They started experimenting with lesser known and low stakes conferences, and improved the process over the years. Eventually they worked out the kinks like COI and implemented the changes at top conferences. If you had to make changes, why would you start with a top journal in the area??

Let me give one more example of a well meaning but ultimately misguided effort to make a change. My former Lt. Governor Gavin Newsom once decided that MOOCs are the answer to education woes and a way for CA to start giving $10K Bachelor’s degrees. The thinking was — let’s make a major change (a disruption!) to the old technology (teaching) in the style of Google, Uber and Theranos!

Lo and behold, California spent millions and went nowhere. Our collective teaching experience during COVID shows that this was not an accident or mismanagement. My current Governor, the very same Gavin Newsom, dropped this idea like a rock, limiting it to cosmetic changes. Note that this isn’t to say that online education is hopeless. In fact, see this old blog post where I offer some suggestions.

My modest proposal

The following suggestions are limited to pure math. Other fields and sciences are much too foreign for me to judge.

(i) Introduce a very clearly defined quick opinion window of about 3-4 weeks. The referees asked for quick opinions can either decline or agree within 48 hours. It will only take them about 10-20 minutes to form an opinion based on the introduction, so give them a week to respond with 1-2 paragraphs. Collect 2-3 quick opinions. If as an editor you feel you need more, you are probably biased against the paper or the area, and are fishing for a negative opinion to have a “quick reject”. This is a bit similar to the way Nature, Science, etc. deal with their submissions.

(ii) Make quick opinion requests anonymous. Request the reviewers to assess how the paper fits the journal (better, worse, on point, best submitted to another area to journals X, Y or Z, etc.) Adopt the practice of returning these opinions to the authors. Proceed to the second stage by mutual agreement. This is a bit similar to TCS, where authors use the feedback from the conference to make decisions about journal or other conference submissions.

(iii) If the paper is rejected or withdrawn after the quick opinion stage, adopt the practice to send quick opinions to another journal where the paper is resubmitted. Don’t communicate the names of the reviewers — if the new editor has no trust in the first editor’s qualifications, let them collect their own quick opinions. This would protect the reviewers from their names going to multiple journals thus making their names semi-public.

(iv) The most selective journals should require that the paper not be available on the web during the quick opinion stage, and violators be rejected without review. Anonymous for one — anonymous for all! The three week long delay is unlikely to hurt anybody, and the journal submission email confirmation should serve as a solid certificate of priority if necessary. Some people will try to game the system, for example by giving a talk with the same title as the paper or writing a blog post. Then it’s at the editor’s discretion what to do.

(v) In the second (actual review) stage, the referees should get papers with authors’ names and proceed per usual practice.

Happy New Year everyone!

What to publish?

This might seem like a strange question. A snarky answer would be “everything!” But no, not really everything. Not all math deserves to be published, just like not all math needs to be done. Making this judgement is difficult and goes against the all too welcoming nature of the field. But if you want to succeed in math as a profession, you need to make some choices. This is a blog post about the choices we make and the choices we ought to make.

Bedtime questions

Suppose you tried to solve a major open problem. You failed. A lot of time is wasted. Maybe it’s false, after all, who knows. You are no longer confident. But you did manage to compute some nice examples, which can be turned into a mediocre little paper. Should you write it and post it on the arXiv? Should you submit it to a third rate journal? A mediocre paper is still a consolation prize, right? Better than nothing, no?

Or, perhaps, it is better not to show how little you proved? Wouldn’t people judge you as an “average” of all published papers on your CV? Wouldn’t this paper have negative impact on your job search next year? Maybe it’s better to just keep it to yourself for now and hope you can make a breakthrough next year? Or some day?

But wait, other people in the area have a lot more papers. Some are also going to be on a job market next year. Shouldn’t you try to catch up and publish every little thing you have? People at other universities do look at the numbers, right? Maybe nobody will notice this little paper. If you have more stuff done by then it will get lost in the middle of your CV, but it will help get the numbers up. Aren’t you clever or what?

Oh, wait, maybe not! You do have to send your CV to your letter writers. They will look at all your papers. How would they react to a mediocre paper? Will they judge you badly? What in the world should you do?!?

Well, obviously I don’t have one simple answer to that. But I do have some thoughts. And this quote from a famous 200 year old Russian play about people who really cared how they are perceived:

Chatsky: I wonder who the judges are! […]

Famusov: My goodness! What will countess Marya Aleksevna say to this?

[Alexander Griboyedov, Woe from Wit, 1823, abridged.]

You would think our society had advanced at least a little…

Who are the champions?

If we want to find the answers to our questions, it’s worth looking at the leaders of the field. Let’s take a few steps back and simply ask — Who are the best mathematicians? Ridiculous questions always get many ridiculous answers, so here is a random ranking by some internet person: Newton, Archimedes, Gauss, Euler, etc. Well, ok — these are all pretty dead and probably never had to deal with a bad referee report (I am assuming).

Here is another random list, from a well named website research.com. Lots of living people finally: Barry Simon, Noga Alon, Gilbert Laporte, S.T. Yau, etc. Sure, why not? But consider this recent entrant: Ravi P. Agarwal is at number 20, comfortably ahead of Paul Erdős at number 25. Uhm, why?

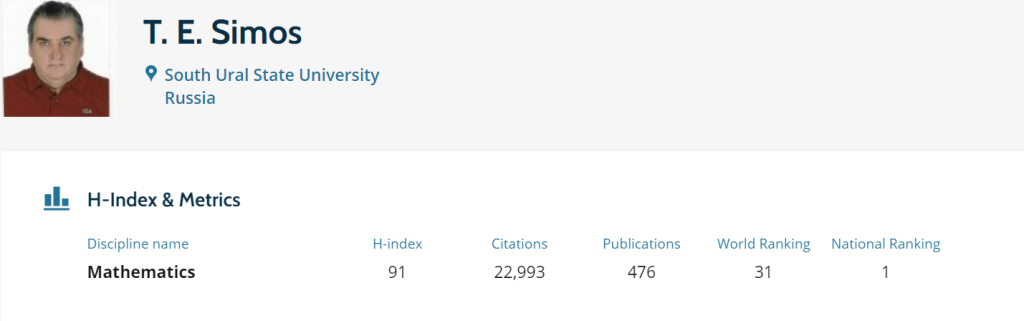

Or consider Theodore E. Simos who is apparently the “Best Russian Mathematician” according to research.com, and number 31 in the world ranking:

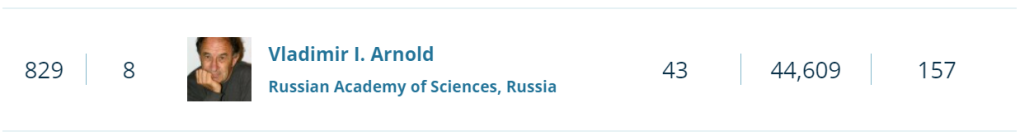

Uhm, I know MANY Russian mathematicians. Some of them are truly excellent. Who is this famous Simos I never heard of? How come he is so far ahead of Vladimir Arnold who is at number 829 on the list?

Of course, you already guessed the answer. It’s obvious from the pictures above. In their infinite wisdom, research.com judges mathematicians by the weighted average of the numbers of papers and citations. Arnold is doing well on citations, but published so little! Only 157 papers!

Numbers rule the world

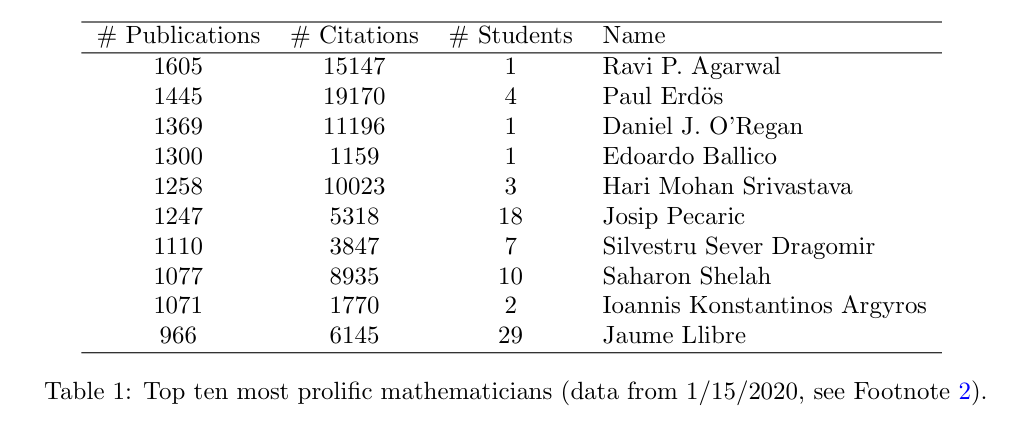

To dig a little deeper into this citation phenomenon, take a look at the following curious table from a recent article “Extremal mathematicians” by Carlos Alfaro:

If you’ve been in the field for a while, you are probably staring at this in disbelief. How do you physically write so many papers?? Is this even true???

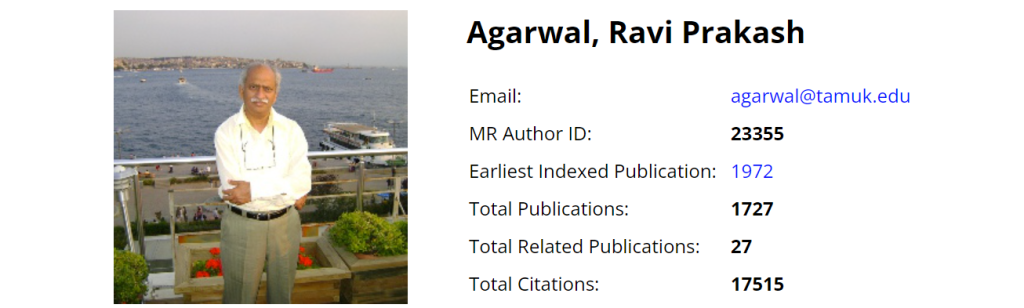

Yes, you know how Paul Erdős did it — he was amazing and he had a lot of coauthors. No, you don’t know how Saharon Shelah does it. But he is a legend, and you are ok with that. But here we meet again our hero Ravi P. Agarwal, the only human mathematician with more papers than Erdős. Who is he? Here is what the MathSciNet says:

Note that Ravi is still going strong — in less than 3 years he added 125 papers. Of these 1727 papers, 645 are with his favorite coauthor Donal O’Regan, number 3 on the list above. Huh? What is going on??

What’s in a number?

If the number of papers is what’s causing you to worry, let’s talk about it. Yes, there is also number of citations, the h-index (which boils down to the number of citations anyway), and maybe other awful measurements of research productivity. But the number of papers is what you have total control over. So here are a few strategies for inflating the number that I learned from a close examination of publishing practices of some of the “extremal mathematicians”. They are best employed in combination:

(a) Form a clique. Over the years build a group of 5-8 close collaborators. Keep writing papers in different subsets of 3-5 of them. This is easier to do since each gets to have many papers while writing only a fraction. Make sure each paper heavily cites all other subsets from the clique. To an untrained eye of an editor, these would appear to be experts who are able to referee the paper.

(b) Form a cartel. This is a strong form of a clique. Invent an area and call yourselves collaborative research in that area. Make up a technical name, something like “analytic and algebraic topology of locally Euclidean metrizations of infinitely differentiable Riemannian manifolds”. Apply for collaborative grants, organize conferences, publish conference proceedings, publish monographs, start your own journal. From outside it looks like a normal research activity, and who is to judge after all?

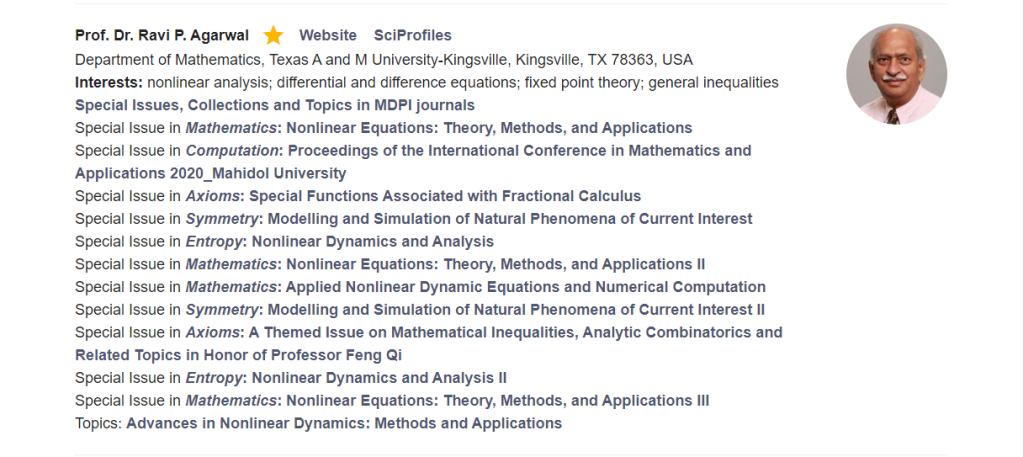

(c) Publish in little known, not very selective or shady journals. For example, Ravi P. Agarwal published 26 papers in Mathematics (MDPI Journal) that I discussed at length in this blog post. Side note: since Mathematics is not indexed by the MathSciNet, the numbers above undercount his total productivity.

(d) Organize special issues with these journals. For example, here is a list of 11(!) special issues for which Agarwal served as a special editor with MDPI. Note the breadth of the collection:

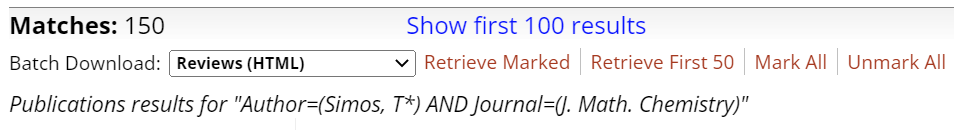

(e) Become an editor of an established but not well managed journal and publish a lot there with all your collaborators. For example, T.E. Simos has a remarkable record of 150 (!) papers in the Journal of Mathematical Chemistry, where he is an editor. I feel that Springer should be ashamed of such poor oversight of this journal, but nothing can be done I am sure since the journal has a healthy 2.413 impact factor, and Simos’s hard work surely contributed to its rise from just 1.056 in 2015. OTOH, maybe somebody can convince the MathSciNet to stop indexing this journal?

Let me emphasize that nothing on the list above is unethical, at least in the way the AMS or the NAS define these (as do most universities I think). The difference is quantitative, not qualitative. So these should not be conflated with various paper mill practices such as those described in this article by Anna Abalkina.

Disclaimer: I strongly recommend you use none of these strategies. They are abusing the system and have detrimental long term effects on both your area and your reputation.
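To see why strategy (a) moves the citation metrics too, here is a toy Python sketch. The h-index is the largest h such that h of your papers have at least h citations each. The model below is a deliberate oversimplification and entirely my assumption: a clique writes n papers in sequence, each new paper cites every earlier clique paper, and nobody outside the clique cites anything. The function names are hypothetical.

```python
def h_index(citations):
    """Largest h such that at least h papers have at least h citations each."""
    ranked = sorted(citations, reverse=True)
    h = 0
    for rank, cites in enumerate(ranked, start=1):
        if cites >= rank:
            h = rank
        else:
            break
    return h


def clique_citations(n_papers):
    """Toy model: paper k (0-based, in time order) is cited by every later paper."""
    return [n_papers - 1 - k for k in range(n_papers)]


for n in (10, 40, 200):
    print(n, "clique papers give h-index", h_index(clique_citations(n)))
```

In this toy model the h-index comes out as half the number of papers, with no outside reader involved, which is the sense in which it boils down to counting.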

Zero-knowledge publishing

In mathematics, there is another method of publishing that I want to describe. This one is borderline unethical at best, so I will refrain from naming names. You figure it out on your own!

Imagine you want to prove a major open problem in the area. More precisely, you want to become famous for doing that without actually getting the proof. In math, you can’t get there without publishing your “proof” in a leading area journal, better yet one of the top journals in mathematics. And if you do, it’s a good bet the referees will examine your proof very carefully. Sounds like a fail-proof system, right?

Think again! Here is an ingenious strategy that I recently happened to learn. The strategy is modeled on the celebrated zero-knowledge proof technique, although the author I am thinking of might not be aware of that.

For simplicity, let’s say the open problem is “A=? Z”. Here is what you do, step by step.

- You come up with a large set of problems P,Q,R,S,T,U,V,W,X,Y which are all equivalent to Z. You then start a well publicized paper factory proving P=Q, W=X, X=Z, Q=Z, etc. All these papers are correct and give a good vibe of somebody who is working hard on the A=?Z problem. Make sure you have a lot of famous coauthors on these papers to further establish your credibility. In haste, make the papers barely readable so that the referees don’t find any major mistakes but get exhausted by the end.

- Make another list of problems B,C,D,E,F,G which are equivalent to A. Keep these equivalences secret. Start writing new papers proving B=T, D=Y, E=X, etc. Write them all in a style similar to the previous list: cumbersome, some missing details, errors in minor arguments, etc. No famous people as coauthors. Do try to involve many grad students and coauthors to generate good will (such a great mentor!) These papers will all be incorrect, but none of them would raise a flag since by themselves they don’t actually prove A=Z.

- Populate the arXiv with all these papers and submit them to different reputable journals in the area. Some referees or random readers will find mistakes, so you fix one incomprehensible detail with another and resubmit. If crucial problems in one paper persist, just drop it and keep going through the motions on all other papers. Take your time.

- Eventually one of these will get accepted because the referees are human and they get tired. They will just assume that the paper they are handling is just like the papers on the first list – clumsily written but ultimately correct. And who wants to drag things down over some random reduction — the young researcher’s career is on the line. Or perhaps, the referee is a coauthor of some of the papers on the first list – in this case they are already conditioned to believe the claims because that’s what they learned from the experience on the joint paper.

- As soon as any paper from the second list is accepted, say E=X, take off the shelf the reduction you already know and make it public with great fanfare. For example, in this case quickly announce that A=E. Combined with the E=X breakthrough, and together with X=W and W=Z previously published in the first list, you can conclude that A=Z. Send it to the Annals. What are the referees going to do? Your newest A=E is inarguable, clearly true. How clever are you to have figured out the last piece so quickly! The other papers are all complicated and confusing, they all raise questions, but somebody must have refereed them and accepted/published them. Congratulations on the solution of A=Z problem! Well done!

It might take years or even decades until the area has a consensus that one should simply ignore the erroneous E=X paper and return to “A=?Z” the status of an open problem. The Annals will refuse to publish a retraction — technically they only published a correct A=E reduction, so it’s all other journals’ fault. It will all be good again, back to normal. But soon after, new papers such as G=U and B=R start to appear, and the agony continues anew…
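The mechanics of the scheme can be sketched as a connectivity question on a graph of published claims. The following Python snippet is only an illustration under my own assumptions: the function name is made up, the edges are the sample equivalences from the steps above, and, crucially, the code treats "published" as "true", which is exactly the flaw being exploited.

```python
from collections import defaultdict, deque


def follows_from(claims, start, goal):
    """Check whether start = goal follows by chaining the claimed equivalences."""
    graph = defaultdict(set)
    for a, b in claims:
        graph[a].add(b)
        graph[b].add(a)
    seen, queue = {start}, deque([start])
    while queue:
        node = queue.popleft()
        if node == goal:
            return True
        for nxt in graph[node] - seen:
            seen.add(nxt)
            queue.append(nxt)
    return False


first_list = [("P", "Q"), ("W", "X"), ("X", "Z"), ("Q", "Z")]  # correct and published
accepted = [("E", "X")]   # incorrect, but a tired referee let it through
shelf = [("A", "E")]      # correct reduction, kept secret until now

print(follows_from(first_list, "A", "Z"))
print(follows_from(first_list + accepted, "A", "Z"))
print(follows_from(first_list + accepted + shelf, "A", "Z"))
```

Only the last call reports that A=Z follows, and it does so through a single bad edge. Remove E=X and the chain falls apart, which is what the area eventually has to do.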

From math to art

Now that I (hopefully) convinced you that a high number of publications is an achievable but ultimately futile goal, how should you judge the papers? Do they at least make a nonnegative contribution to one’s CV? The answer to the latter question is “No”. This contribution can be negative. One way to think about it is by invoking the high end art market.

Any art historian would be happy to vouch that the worth of a painting hinges heavily on the identity of the artist. But why should it? If the whole purpose of a piece of art is to evoke some feelings, how does the artist figure into this formula? This is super naïve, obviously, and I am sure you all understand why. My point is that things are not so simple.

One way to see a pattern among famous artists is to realize that they don’t just create “one off” paintings, but rather a “series”. For example, Monet famously had haystack and Rouen Cathedral series, Van Gogh had a sunflowers series, Mondrian had a distinctive style with his “tableau” and “composition” series, etc. Having a recognizable very distinctive style is important, suggesting that paintings in a series are valued differently than those that are not, even if they are by the same artist.

Finally, the scarcity is an issue. For example Rodin’s Thinker is one of the most recognizable sculptures in the world. So is the Celebration series by Jeff Koons. While the latter keep fetching enormous prices at auctions, the latest sale of a Thinker couldn’t get a fifth of the Yellow Balloon Dog price. It could be because balloon animals are so cool, but could also be that there are 27 Thinkers in total, all made from the same cast. OTOH, there are only 5 balloon dogs, and they all have distinctly different colors making them both instantly recognizable yet still unique. You get it now — it’s complicated…

What papers to write

There isn’t anything objective of course, but thinking of art helps. Let’s figure this out by working backward. At the end, you need to be able to give a good colloquium style talk about your work. What kind of papers should you write to give such a talk?

- You can solve a major open problem. The talk writes itself then. You discuss the background, many famous people’s attempts and partial solutions. Then state your result and give an idea of the proof. Done. No need to have a follow up or related work. Your theorem speaks for itself. This is analogous to the most famous paintings. There are no haystacks or sunflowers on that list.

- You can tell a good story. I already wrote about how to write a good story in a math paper, and this is related. You start your talk by telling what’s the state of the sub-area, what are the major open problems and how do different aspects of your work fit in the picture. Then talk about how the technology that you develop over several papers positioned you to make a major advance in the area that is your most recent work. This is analogous to a series of paintings.

- You can prove something small and nice, but be an amazing lecturer. You mesmerize the audience with your eloquence. For about 5 minutes after your talk they will keep thinking this little problem you solved is the most important result in all of mathematics. This feeling will fade, but good vibes will remain. They might still hire you — such talent is rare and teaching excellence is very valuable.

That’s it. If you want to give a good job talk, there is no other way to do it. This is why writing many one-off little papers makes very little sense. A good talk is not a patchwork quilt – you can’t make it out of disparate pieces. In fact, I heard some talks where people tried to do that. They always have the coherence of a portrait gallery of different subjects by different artists.

Back to the bedtime questions — the answer should be easy to guess now. If your little paper fits the narrative, do write it and publish it. If it helps you tell a good story — that sounds great. People in the area will want to know that you are brave enough to make a push towards a difficult problem using the tools or results you previously developed. But if it’s a one-off thing, like you thought for some reason that you could solve a major open problem in another area — why tell anyone? If anything, this distracts from the story you want to tell about your main line of research.

How to judge other people’s papers

First, you do what you usually do. Read the paper, make a judgement on the validity and relative importance of the result. But then you supplement the judgement with what you know about the author, just like when you judge a painting.

This may seem controversial, but it’s not. We live in an era of thousands of math journals which publish in total over 130K papers a year (according to MathSciNet). The sheer amount of mathematical research is overwhelming and the expertise has fractured into tiny sub-sub-areas, many hundreds of them. Deciding if a paper is a useful contribution to the area is by definition a function of what the community thinks about the paper.

Clearly, you can’t poll all members of the community, but you can ask a couple of people (usually called referees). And you can look at how previous papers by the author have been received by the community. This is why in the art world they always write about recent sales: for how much money the previous paintings sold, which museums or private collections bought them, etc. Let me give you some math examples.

Say, you are an editor. Somebody submits a bijective proof of a binomial identity. The paper is short but nice. Clearly publishable. But then you check previous publications and discover the author has several/many other published papers with nice bijective proofs of other binomial identities, and all of them have mostly self-citations. Then you realize that in the ocean of binomial identities you can’t even check if this work has been done before. If somebody in the future wants to use this bijection, how would they go about looking for it? What will they be googling for? If you don’t have good answers to these questions, why would you accept such a paper then?

Say, you are hiring a postdoc. You see files of two candidates in your area. Both have excellent, well-written research proposals. One has 15 papers, another just 5 papers. The first is all over the place, can do and solve anything. The second is studious and works towards building a theory. You only have time to read the proposals (nobody has time to read all 20 papers). You look at the best papers of each and they are of similar quality. Who do you hire?

That depends on who you are looking for, obviously. If you are a fancy shmancy university where there are many grad students and postdocs all competing with each other, none working closely with their postdoc supervisor, then probably the first one. Lots of random papers is a plus: the candidate clearly adapts well and will work with many others without the need for supervision. There is even a chance that they prove something truly important, it’s hard to say, right? Whether they get a good TT job afterwards and what kind of job that would be is really irrelevant, since other postdocs will be coming in a steady flow anyway.

But if you want this new postdoc to work closely with a faculty member at your university, someone intent on building something valuable, so that they are able to give a nice job talk telling a good story at the end, hire the second one. The first is much too independent and will probably be unable to concentrate on anything specific. The amount of supervision tends to decrease, not increase, as people move up. Left to their own devices, you expect more of the same from these postdocs, so the choice becomes easy.

Say, you are looking at a paper submitted to you as an editor of an obscure journal. You need a referee. You look at the previous papers by the authors and see lots of repeated names. Maybe it’s a clique? Make sure your referees are not from this clique and are completely unrelated to it in any way.

Or, say, you are looking at a paper in your area which claims to have made an important step towards resolving a major conjecture. The first thing you do is look at previous papers by the same person. Have they said the same before? Was it the same or a different approach? Have any of their papers been retracted or major mistakes found? Do they have several parallel papers which prove not exactly related results towards the same goal? If the answer is Yes, this might be a zero-knowledge publishing attempt. Do nothing. But do tell everyone in the area to ignore this author until they publish one definitive paper proving all their claims. Or not, most likely…

P.S. I realize that many well-meaning journals have double-blind reviews. I understand where they are coming from, but I think that in the case of math this is misguided. This post is already much too long for me to talk about that. Some other time, perhaps.

How I chose Enumerative Combinatorics

Apologies for not writing anything for a while. After Feb 24, the math part of the “life and math” slogan lost a bit of relevance, while the actual events were stupefying to the point where I had nothing to say about the life part. Now that the shock has subsided, let me break the silence by telling an old personal story which is neither relevant to anything happening right now nor a lesson to anyone. Sometimes a story is just a story…

My field

As the readers of this blog know, I am a Combinatorialist. Not a “proud one”. Just “a combinatorialist”. To paraphrase a military slogan “there are many fields like this one, but this one is mine”. While I’ve been defending my field for years, writing about its struggles, and often defining it, it’s not because this field is more important than others. Rather, because it’s so frequently misunderstood.

In fact, I have worked in other (mostly adjacent) fields, but that was usually because I was curious. Curious what’s going on in other areas, curious if they had tools to help me with my problems. Curious if they had problems that could use my tools. I would go to seminars in other fields, read papers, travel to conferences, make friends. Occasionally this strategy paid off and I would publish something in another field. Much more often nothing ever came out of that. It was fun regardless.

Anyway, I have wanted to work in combinatorics for as long as I can remember, since I was about 15 or so. There is something inherently discrete about the way I see the world, so much so that having additional structure is just obstructing the view. Here is how Gian-Carlo Rota famously put it:

Combinatorics is an honest subject. […] You either have the right number or you haven’t. You get the feeling that the result you have discovered is forever, because it’s concrete. [Los Alamos Science, 1985]

I agree. Also, I really like to count. When prompted, I always say “I work in Combinatorics” even if sometimes I really don’t. But in truth, the field is much too large and not unified, so when asked to be more specific (this rarely happens) I say “Enumerative Combinatorics”. What follows is a short story of how I made the choice.

Family vacation

When I was about 18, Andrey Zelevinsky (ז״ל) introduced me and Alex Postnikov to Israel Gelfand and asked what we should be reading if we wanted to do combinatorics. Unlike most leading mathematicians in Russia, Gelfand had a surprisingly positive view on the subject (see e.g. his quotes here). He suggested we both read Macdonald’s book, which was translated into Russian by Zelevinsky himself just a few years earlier. The book was extremely informative but dry as a fig and left little room for creativity. I read a large chunk of it and concluded that if this is what modern combinatorics looks like, I want to have nothing to do with it. Alex had a very different impression, I think.

The next year, my extended family decided to have a vacation on a Russian “river cruise”. I remember a small passenger boat which left the Moscow river terminal and navigated a succession of small rivers until it reached the Volga. From there, the boat glided smoothly all the way to the Caspian Sea. The vacation was about three weeks of hot summer torture, with the only relief served by mouth-watering fresh watermelons.

What made it worse, there was absolutely nothing to see. Much of the way the Volga is enormously wide, sometimes as wide as the English Channel. Most of the time you couldn’t even see the river banks. The cities distinguished themselves only by an assortment of Lenin statues, but were unremarkable otherwise. Volgograd was an exception with its very impressive statue, the tallest in Europe, roughly as tall as the Statue of Liberty when combined with its pedestal. Impressive for sure, but not worth the trip. Long story short, the whole cruise vacation was dreadfully boring.

One good book can make a difference

While most of my relatives occupied themselves by reading crime novels or playing cards, I was reading a math book, the only book I brought with me. This was Stanley’s Enumerative Combinatorics (vol. 1) whose Russian translation came out just a few months earlier. So I read it cover-to-cover, but did only the easiest exercises, just to make sure I understood what was going on. That book changed everything…

Midway through, when I was reading about linear extensions of posets in Ch. 3 with their obvious connections to standard Young tableaux and the hook-length formula (which I already knew by then), I had an idea. From Macdonald’s book, I remembered the q-analogue of #SYT via the “charge”, a statistic introduced by Lascoux and Schützenberger to give a combinatorial interpretation of Kostka polynomials, and which works even for skew Young diagram shapes. I figured that skew shapes are generic enough, and there should be a generalization of the charge to all posets. After several long days filled with some tedious calculations by hand, I came up with both the statement and the proof of the q-analogue of the number of linear extensions.
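
For readers who want to play with these objects, here is a minimal Python sketch that lists the linear extensions of a small poset by brute force and tabulates them by major index, the MacMahon statistic behind the standard q-analogue for naturally labeled posets. The function names and the encoding of a poset as a list of pairs (i, j), meaning i lies below j, are assumptions made for illustration. It does not attempt to reproduce the charge-based construction from the story.

```python
from itertools import permutations


def linear_extensions(n, relations):
    """Yield every ordering of 1..n in which i comes before j for each (i, j)."""
    for w in permutations(range(1, n + 1)):
        pos = {value: index for index, value in enumerate(w)}
        if all(pos[i] < pos[j] for i, j in relations):
            yield w


def major_index(w):
    """Sum of the 1-based positions k where w[k] > w[k+1] (the descents)."""
    return sum(k + 1 for k in range(len(w) - 1) if w[k] > w[k + 1])


def maj_polynomial(n, relations):
    """Return a list c where c[m] counts linear extensions with major index m."""
    coeffs = [0] * (n * (n - 1) // 2 + 1)  # major index is at most n(n-1)/2
    for w in linear_extensions(n, relations):
        coeffs[major_index(w)] += 1
    return coeffs


# The 2x2 square poset, labeled so that every relation (i, j) has i < j.
square = [(1, 2), (1, 3), (2, 4), (3, 4)]
print(sum(maj_polynomial(4, square)))  # number of linear extensions
print(maj_polynomial(4, square))       # coefficients of the q-analogue
```

For the 2×2 square poset there are two linear extensions, 1234 and 1324, and the polynomial is 1 + q^2. With no relations at all on three elements, the same code returns the coefficients of 1 + 2q + 2q^2 + q^3. Brute force over all permutations is only sensible for very small n.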

I wrote the proof neatly in my notebook with a clear intent to publish my “remarkable discovery”, and continued reading. In Ch. 4, all of a sudden, I read the “P-partition theory” that I just invented by myself. It came with various applications and connections to other problems, and was presented so well, much nicer than I would have!

After some extreme disappointment, I learned from the notes that the P-partition theory was a large portion of Stanley’s own Ph.D. thesis, which he wrote before I was born. For a few hours, I did nothing but meditate, staring at the vast water surrounding me and ignoring my relatives who couldn’t care less what I was doing anyway. I was trying to think if there is a lesson in this fiasco.

It pays to be positive and self-assured, I suppose, in a way that only a teenager can be. That day I concluded that I am clearly doing something right, definitely smarter than everyone else even if born a little too late. More importantly, I figured that Enumerative Combinatorics done “Stanley-style” is really the right area for me…

Epilogue

I stopped thinking that I am smarter than everyone else within weeks, as soon as I learned more math. I no longer believe I was born too late. I did end up doing a lot of Enumerative Combinatorics. Much later I became Richard Stanley’s postdoc for a short time and a colleague at MIT for a long time. Even now, I continue writing papers on the numbers of linear extensions and standard Young tableaux. Occasionally, I also discuss their q-analogues (like in my most recent paper). I still care and it’s still the right area for me…

Some years later I realized that historically, the “charge” and Stanley’s q-statistics were not independent, in the sense that both are generalizations of the major index by Percy MacMahon. In his revision of vol. 1, Stanley moved the P-partition theory up to Ch. 3, where it belongs IMO. In 2001, he received the Steele Prize for Mathematical Exposition for the book that changed everything…

Are we united in anything?

Unity here, unity there, unity shmunity is everywhere. You just can’t avoid hearing about it. Every day, no matter the subject, somebody is going to call for it. Be it in Ukraine or Canada, Taiwan or Haiti, everyone is calling for unity. President Biden in his Inaugural Address called for it eight times by my count. So did former President Bush on every recent societal issue: here, there, everywhere. So did Obama and Reagan. I am sure just about every major US politician made the same call at some point. And why not? Like “world peace”, unity is assumed to be a universal good, or at least an inspirational if quickly forgettable goal.

Take the Beijing Olympic Games, which proudly claim that their motto “demonstrates unity and a collective effort” towards “the goal of pursuing world unity, peace and progress”. Come again? While The New York Times isn’t buying the whole “world unity” thing and calls the games “divisive”, it still thinks that “Opening Ceremony [is] in Search of Unity.” Vox is also going there, claiming that the ceremony “emphasized peace, world unity, and the people around the world who have battled the pandemic.” So it sounds to me that despite all the politics, both Vox and the Times think that this mythical unity is something valuable, right? Well, ok, good to know…